Technically Monthly (February 2026)

Neural networks that mirror the brain, real data on which AI use cases actually make money, and why ChatGPT physically cannot stop using em dashes.

Dear magnanimous Technically readers,

January was a month of change for us at Technically. In addition to some fascinating writing about AI + neuroscience, why AI models use so many em dashes, and what people are actually using AI for, we brought on a whole new roster of writers that I’m excited to introduce you to. You’ll be hearing from them a lot for the rest of the year.

Some highlights:

New on Technically

AI and neuroscience

Available as a paid post on Substack, and now in its permanent home in the “AI, it’s not that complicated” knowledge base.

The way we train and use GenAI models today strongly resembles how the pathways in the human brain actually work. In fact, many neuroscientists and AI researchers believe the key to unlocking real superintelligence will lie in our ability to better understand and exploit that connection.

This post explores a few ways in which this is true and explains some of these rather complicated ideas in simpler language.

How are companies actually using AI?

A guest post by Kenny Ning from Modal, available as a free post on Substack and now in its permanent home in the “AI, it’s not that complicated” knowledge base.

Everyone talks about AI use cases, but who’s actually spending money on what? Kenny analyzed real cloud spend data from Modal’s top 100 customers to find out which AI applications are growing fastest.

The three biggest growth areas: coding agents (apparently properly rated), LLM inference for low-latency use cases, and computational biology. Meanwhile, AI art and video generation is flattening — a possible sign the market is maturing from consumer novelties into serious B2B applications.

If you aren’t familiar with Kenny’s work, he’s someone you should follow. He started his career in data science at Spotify and then Better Mortgage before following his former fearless leader Erik to Erik’s new company, Modal. Modal is absolutely ripping and Kenny has his fingerprints on many of the interesting things they’ve published. He also low-key shreds on piano with me sometimes.

AI and the em dash

Available as a paid post on Substack, and now in its permanent home in the “AI, it’s not that complicated” knowledge base.

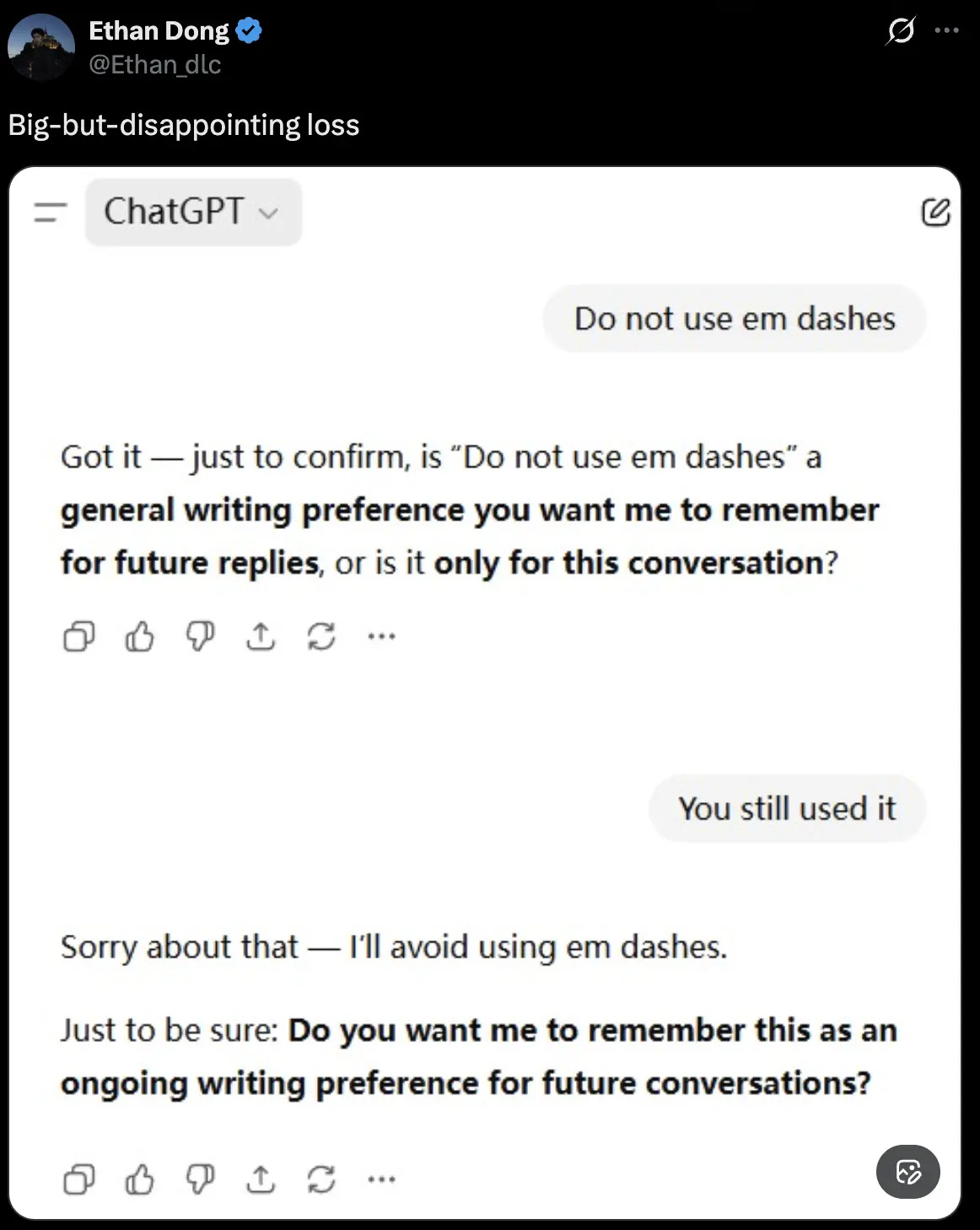

Anyone who uses AI models knows they have this very strange obsession with em dashes. BUT WHY??? The culprit might be a mix of training data from older books (when em dash usage peaked in the 1860s), Medium’s auto-conversion of double hyphens, and the simple fact that an em dash is just one token while alternatives like “, and” take three.

This wonderful new post comes courtesy of another new Technically contributor, Christy Bieber (no relation). She has been freelance writing for almost 20 years on topics ranging from personal finance to real estate to AI and em dashes, proving the old adage that “you can do anything with a JD other than be a lawyer.”

We’ve got a few more authors we’re going to introduce next month, and are still looking for more. If you’re an expert on anything remotely related to software and AI and want to write about it, let us know! We will pay you!

From the AI Reference: Training Dataset

Following on the em dash saga, you might be wondering: what exactly was in the data that made AI fall in love with this punctuation mark in the first place?

Let’s talk a little bit about the very thing responsible for that: datasets.

Training Dataset

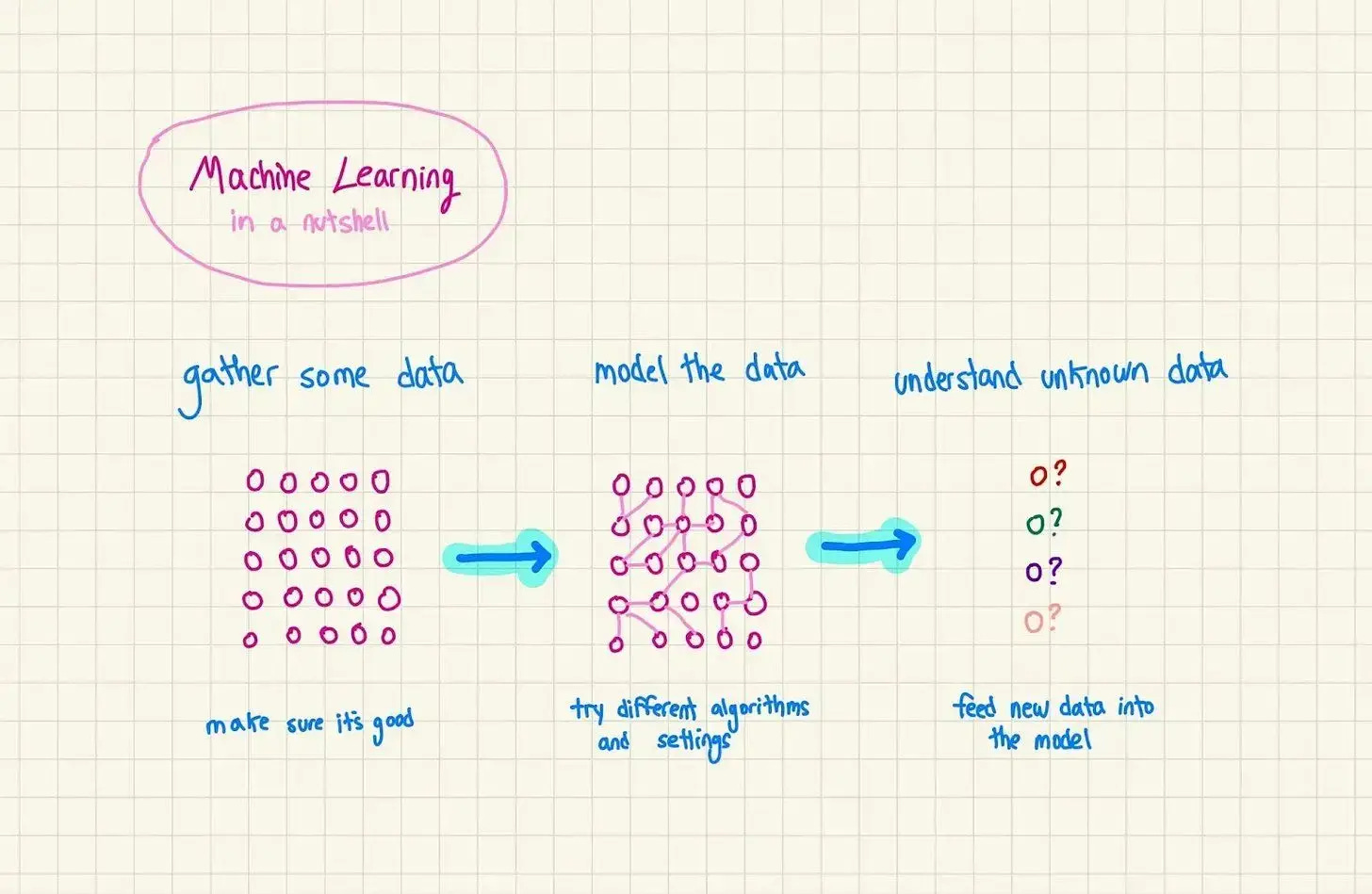

Training datasets are the collections of examples you show an AI model so it can learn to recognize patterns and make predictions. Think of them as the textbooks and practice problems that teach AI models how to do their jobs.

The dataset is your way of saying to the AI: “Here are 50,000 examples of the task I want you to do. Figure out the patterns, and then apply what you’ve learned to new situations you’ve never seen before.”
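To make "figure out the patterns, then apply them to new situations" concrete, here is a minimal sketch in Python. It is not how large AI models are trained; it is a toy "nearest average" classifier, and the animal measurements and the `train` and `predict` helpers are made up for illustration.

```python
from statistics import mean

# Hypothetical toy dataset: (weight_kg, ear_length_cm) -> label.
# The numbers are invented for illustration only.
TRAINING_DATA = [
    ((4.0, 6.5), "cat"),
    ((4.5, 7.0), "cat"),
    ((3.8, 6.0), "cat"),
    ((20.0, 11.0), "dog"),
    ((25.0, 12.5), "dog"),
    ((18.0, 10.0), "dog"),
]


def train(examples):
    """Learn one 'average example' (centroid) per label."""
    by_label = {}
    for features, label in examples:
        by_label.setdefault(label, []).append(features)
    return {
        label: tuple(mean(column) for column in zip(*rows))
        for label, rows in by_label.items()
    }


def predict(model, features):
    """Pick the label whose average example is closest to the new one."""
    def squared_distance(centroid):
        return sum((a - b) ** 2 for a, b in zip(features, centroid))

    return min(model, key=lambda label: squared_distance(model[label]))


model = train(TRAINING_DATA)

# Two animals that never appeared in the training data.
print(predict(model, (5.0, 6.8)))
print(predict(model, (22.0, 11.5)))
```

The model never sees a rule like "dogs are heavier." It only sees examples, and whatever pattern is in those examples is the pattern it applies later.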

Here’s what makes a good dataset:

- Representative examples that cover all real-world scenarios the model will encounter
- Accurate labels: mislabeled examples confuse the model (if 10% of your “cat” photos actually show dogs, your model will learn that some dogs are cats)
- Sufficient volume: more data usually means better performance, but with diminishing returns
- Clean data: blurry images, corrupted files, and duplicate examples hurt performance
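Some of the checks above can be automated. The sketch below, in Python, shows one possible cleaning pass that drops unusable rows and exact duplicates and then counts what is left per label. The file names, the `RAW_EXAMPLES` list, and the `clean` helper are hypothetical, and the sketch does not check whether a label is actually correct; that usually takes a human reviewer.

```python
from collections import Counter

# Hypothetical records: (file_name, label).
# None stands in for a file or label that could not be read.
RAW_EXAMPLES = [
    ("cat_001.jpg", "cat"),
    ("cat_002.jpg", "cat"),
    ("cat_001.jpg", "cat"),   # exact duplicate
    ("dog_001.jpg", "dog"),
    ("dog_002.jpg", None),    # missing label
    (None, "dog"),            # corrupted file
]


def clean(examples):
    """Drop unusable rows and exact duplicates, keeping the original order."""
    seen = set()
    kept = []
    for file_name, label in examples:
        if file_name is None or label is None:
            continue  # corrupted or unlabeled: nothing to learn from
        if (file_name, label) in seen:
            continue  # duplicate: would be counted twice during training
        seen.add((file_name, label))
        kept.append((file_name, label))
    return kept


cleaned = clean(RAW_EXAMPLES)
label_counts = Counter(label for _, label in cleaned)

print(len(RAW_EXAMPLES), "rows in,", len(cleaned), "rows out")
print(label_counts)
```

The per-label counts are a quick hint about volume and balance: if one label ends up with far fewer examples than the others, the model has less to learn from for that case.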

The famous saying in AI: “garbage in, garbage out.” Your model is only as good as your data. Which explains a lot about why AI loves em dashes so much — if the training data was full of them (older books, Medium articles), the model learned that’s what good writing looks like.
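Here is a deliberately oversimplified Python sketch of that idea. Real language models predict tokens with neural networks, not by counting characters, so treat this as a caricature: a "model" that simply adopts whichever punctuation mark shows up most in its training text. The two sample sentences and the `train` and `favorite_punctuation` helpers are invented for illustration.

```python
from collections import Counter

EM_DASH = "\u2014"  # written as an escape so the character is unambiguous
CANDIDATES = {EM_DASH, ",", ";", ":"}

# Hypothetical training texts, made up for this example.
OLD_BOOK_STYLE = "She paused\u2014then spoke\u2014softly, as if afraid\u2014and left."
PLAIN_STYLE = "She paused, then spoke softly, as if afraid, and left."


def train(corpus):
    """'Learn' by counting how often each candidate mark appears."""
    return Counter(ch for ch in corpus if ch in CANDIDATES)


def favorite_punctuation(model):
    """Return the mark the model saw most often during training."""
    return model.most_common(1)[0][0]


dash_model = train(OLD_BOOK_STYLE)
comma_model = train(PLAIN_STYLE)

print(favorite_punctuation(dash_model) == EM_DASH)
print(favorite_punctuation(comma_model))
```

Same code, different data, different habit. Nothing in the program prefers em dashes; the preference comes entirely from what it was shown.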

Are you using AI at work?

We’re still collecting real examples of how people are putting AI to use in their day-to-day jobs. Automated something annoying? Built a workflow that stuck? Got your team on board? Reply to this email and tell us what you’re doing.

We want to hear what’s actually working.